I watched “Page One: Inside The New York Times” on Netflix Sunday. It’s a documentary that focuses on the NYT as an institution in news reporting in the United States and the world, but also discusses the changing face of media (e.g. blogs, Twitter, etc.) and the ability of just about anyone to put out “unfiltered” news directly to the general public, as in the case of the WikiLeaks debacle from last year. The documentary is pretty interesting, though I think they “bounced around” a bit more than I would prefer without any good transitions.

One of the recurring themes in the documentary was the battle currently being waged between “Old Media” and “New Media.” For example, you can go to practically any news blog now for your news, as many people do, but practically all of them just re-word and re-post the same information that was originally presented in the NYT. Thus, the regular consumer of news gets their information for free without ever having to visit the NYT website or pick up a paper, and therefore, the NYT never gets any ad revenue or subscriber fees from the reader.

Which leads to the central question of the documentary: how long will this be sustainable? Or, re-worded, how long can the New York Times, and institutions like it, survive in a “digital world” using their traditional economic models?

I heard a related story on NPR last week talking about Amazon and Apple (but mostly Amazon) and how the European Union is investigating them for antitrust violations with regard to e-book prices on their respective stores. These two companies essentially dictate to the publisher how much their books will sell for (typically around $10), while the publishing companies used to be able to charge quite a bit more than that for a hardcover new release (let alone the fact that they set the price, not the distributor).

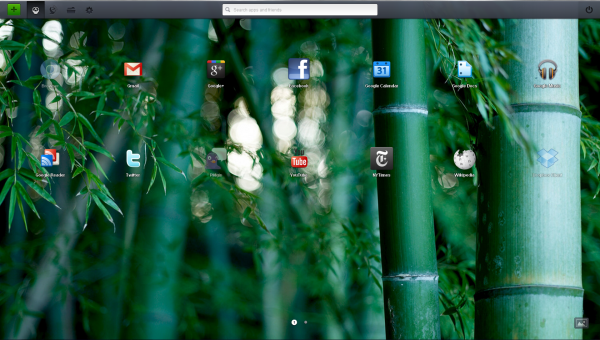

Now, in the case of the Times, I’m not really sure what the solution is. They have already taken steps to increase revenue by charging for their website, and I think that’s helping. At the very least, they’re making an attempt to survive the transition into digital media. Likely, as tablets broaden their reach to consumers, they will be able to charge for their app, or access to stories, effectively turning tablets into digital NYT readers. There is certainly money to be had if you produce a good app, and the NYT has a pretty decent one. It’s unfortunate that a lot of people out there don’t understand where news comes from and that most of these blogs a) don’t actually investigate their own news (they just re-post it from other sources), and b) frequently have some kind of agenda, so it may not be as objective as it should be to be considered capital-J “Journalism.” There is value in actual news and people are willing to pay for it: the NYT just needs to figure out how to sustain the same standard of Journalism while operating under realistic expectations of what the public will pay for it.

In the case of book, movie, and music publishers, though, I think they need to adjust their model quickly. For example, if one considers a new-release book at Barnes and Noble, it’s likely it would cost you $20 or more. It simply doesn’t make sense to charge $20 for a digital copy (as publishers would love to do). The same thing goes for movies: I’m not going to spend the Bluray price of a movie for the digital version.

Now, those full prices don’t typically occur for movies and books because of the digital systems that have grown up to deliver the content for you. For a new movie like Rise of the Planet of the Apes, you’ll spend $22.99 for the Bluray and $14.99 for the digital version, so there’s some premium for the physical copy and some discount for the digital copy. In video games, however, this typically isn’t the case. When the newest Call of Duty game came out on PC, it was $60, regardless of whether you got a physical disc in a box or downloaded it. With games, there has been something of a “relationship” developed between the publishers and the retailers (e.g. Gamestop, Wal-Mart, etc.): the publisher could offer a discount on a digital version, but in order to appease the brick-and-mortar retailer, they keep it the same price so you still may go into their store.

Ultimately, “Old Media” needs to realize that they can’t support the distribution systems that they used for the past few decades. This is starting to happen with books, where chains like Borders went bankrupt because they couldn’t make the transition to a digital age. Companies like Gamestop are starting to make the transition, offering a digital streaming service not unlike Netflix Instant. Companies like Wal-Mart will probably just stop offering games and movies, eventually, but they’ll survive because they sell other things (among other reasons).

But the publishers still have much to worry about: their teams of editors, binders, layout people, and so on. These are the people who were needed to lay out print for publication, to set up distribution chains for each product, to design the inside of game manuals, or to design the cases that your DVD or Bluray came in. These are all things that just aren’t (as) necessary in a fully digital world. You don’t need to worry about distribution when you can just sell it on the internet to everyone. However, publishers are still trying to charge additional money on the digital side in order to support these folks on the physical side of their product.

Now, my solution to this problem is to increase the cost of the physical media and further decrease the cost of the digital one. If there’s anything apps on the iPhone or Android have shown developers, it’s that selling your product for $1 means that you’ll sell to additional people, and you’ll make your money back on volume. I mean, if you could just buy a new release movie for $5, would you do it? Would you even think about the purchase? Would you care if you only watched it one time? That’s cheaper than a single ticket to go see the movie in theaters. If new movies, digitally distributed, without any special features were $5, I think they’d sell more.
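To put rough numbers on the “make your money back on volume” idea, here is a minimal back-of-the-envelope sketch. It assumes the only thing that matters is gross revenue (price times copies sold), which ignores the store’s cut, per-copy costs, and everything else a real publisher would care about. The function names and the sales figure of 1,000 copies are hypothetical, made up purely for illustration. Prices are in cents to keep the arithmetic exact.

```python
def break_even_multiplier(old_price_cents: int, new_price_cents: int) -> float:
    """How many times more copies must sell at the new price
    to bring in the same gross revenue as at the old price."""
    if old_price_cents <= 0 or new_price_cents <= 0:
        raise ValueError("prices must be positive")
    return old_price_cents / new_price_cents


def units_to_match_revenue(old_price_cents: int, old_units: int,
                           new_price_cents: int) -> int:
    """Smallest whole number of copies at the new price whose gross
    revenue is at least old_price_cents * old_units."""
    if old_price_cents <= 0 or new_price_cents <= 0 or old_units < 0:
        raise ValueError("prices must be positive and units non-negative")
    target_revenue = old_price_cents * old_units
    # Integer ceiling division, so we never fall one copy short.
    return -(-target_revenue // new_price_cents)


if __name__ == "__main__":
    # A $20 book dropped to $5, with a hypothetical 1,000 copies sold at $20.
    print(break_even_multiplier(2000, 500))
    print(units_to_match_revenue(2000, 1000, 500))
    # The $14.99 digital movie dropped to $5, same hypothetical 1,000 copies.
    print(units_to_match_revenue(1499, 1000, 500))
```

Under those simplifying assumptions, a $20 book cut to $5 has to sell four times as many copies just to match the old revenue, while a $14.99 digital movie cut to $5 needs roughly three times as many. Whether the impulse-buy effect is really that strong is the open question.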

But again, publishers should still hang on to their “physical media” production scheme, as there will still be people that want an actual Bluray disc. And I definitely know that there will be people that want a physical book, rather than an e-reader form. But wouldn’t more people buy books if they were $5 for a new one, rather than $20? Sure, pay the premium if you want a nice, hardcover, bound, indestructible copy of a book for your collection, but don’t make people that just want to read the book help finance other people’s need for a physical copy.

There’s a somewhat longstanding psychological “principle” in gaming related to the $100 price point. Once any gaming console hits $100, many consumers won’t even think about the price; it becomes an impulse buy. A similar phenomenon happened with the Wii, even though it cost $250 at release: at that price, it was still cheap enough to be an impulse buy for many people who just wanted to play Wii Sports.

“Old Media” publishers need to find the “impulse buy” price for their products. In the case of movies and books, I think $10 is a fair price to charge, but $5 is the “impulse buy” price. Once publishers start selling their wares down there for a digital form, I think they’ll make their money back on volume, and only then will they survive.

Edit: I read this article from Slate today, discussing Amazon and its tactics that end up hurting brick and mortar bookstores. I particularly liked this line:

> But say you don’t care about local cultural experiences. Say you just care about books. Well, then it’s easy: The lower the price, the more books people will buy, and the more books people buy, the more they’ll read.

Yup.